I am Zhicheng Yang, a PhD Candidate in Large Language Model Reasoning. I work on reasoning-centric large language models, with current interests in solve-and-verify paradigms, reasoning data synthesis, and test-time scaling. My recent work focuses on expert-level mathematical reasoning, verification, efficient and readable chain-of-thought (CoT) reasoning, and agentic reasoning.

My research aims to build LLM reasoning systems that can solve, critique, and verify long-horizon problems with stronger reliability and efficiency. You can find my work on Google Scholar.

🔥 News

- 2026.05: Two papers, Accordion-Thinking and Depth-Breadth Synergy, were accepted to ICML 2026.

- 2026.02: One paper was accepted to CVPR 2026.

- 2025.09: Two papers were accepted to EMNLP 2025.

- 2025.01: Co-organizing the 2nd AI4MATH Workshop @ ICML 2025.

- 2025.01: One paper was accepted to ICLR 2025.

- 2024.05: Served as lead organizer of the challenge "Automated Optimization Problem-Solving with Code" at the 1st AI4MATH Workshop @ ICML 2024.

📝 Selected Publications

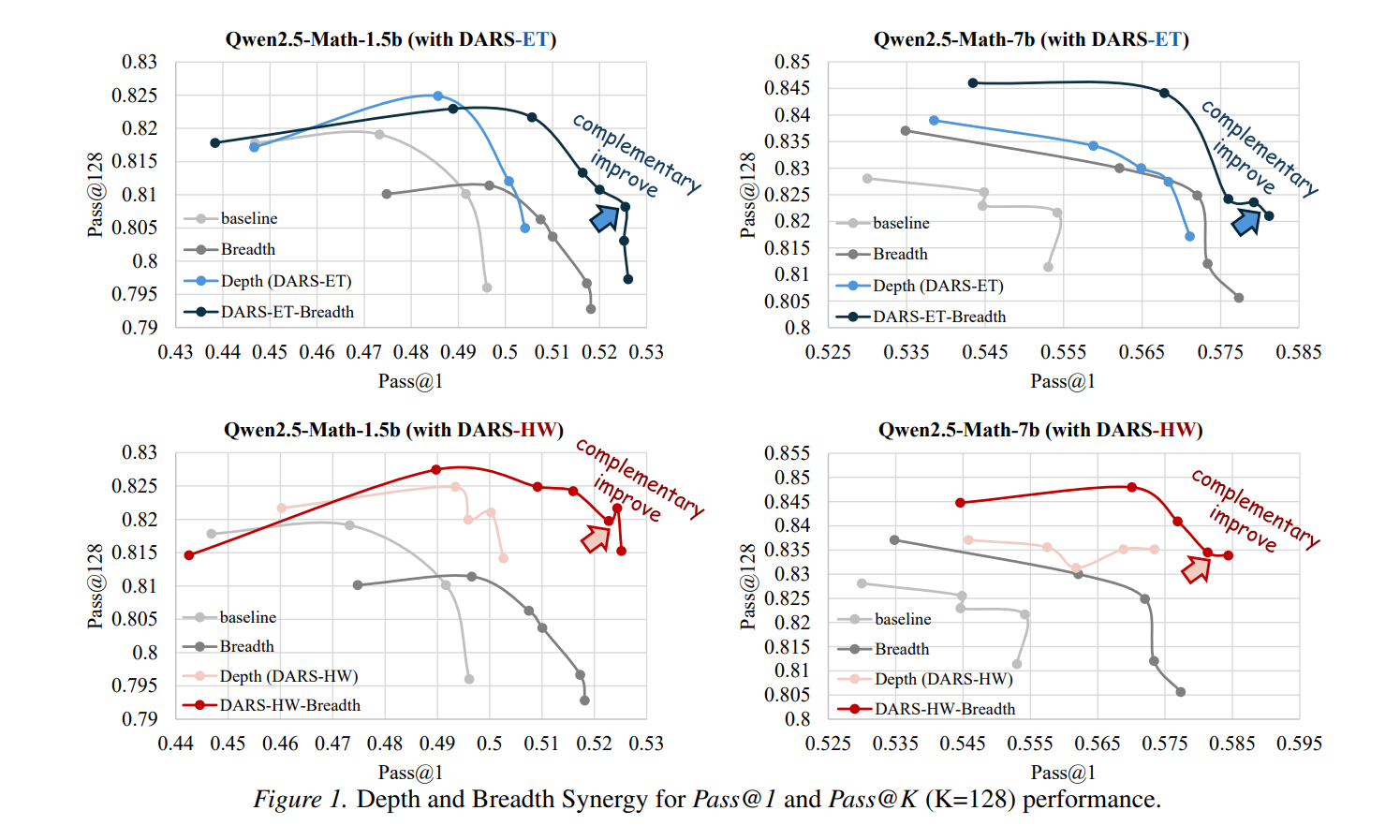

Depth-Breadth Synergy in RLVR: Unlocking LLM Reasoning Gains with Adaptive Exploration

Zhicheng Yang, Zhijiang Guo, Yinya Huang, Yongxin Wang, Dongchun Xie, Yiwei Wang, Xiaodan Liang, Jing Tang

International Conference on Machine Learning

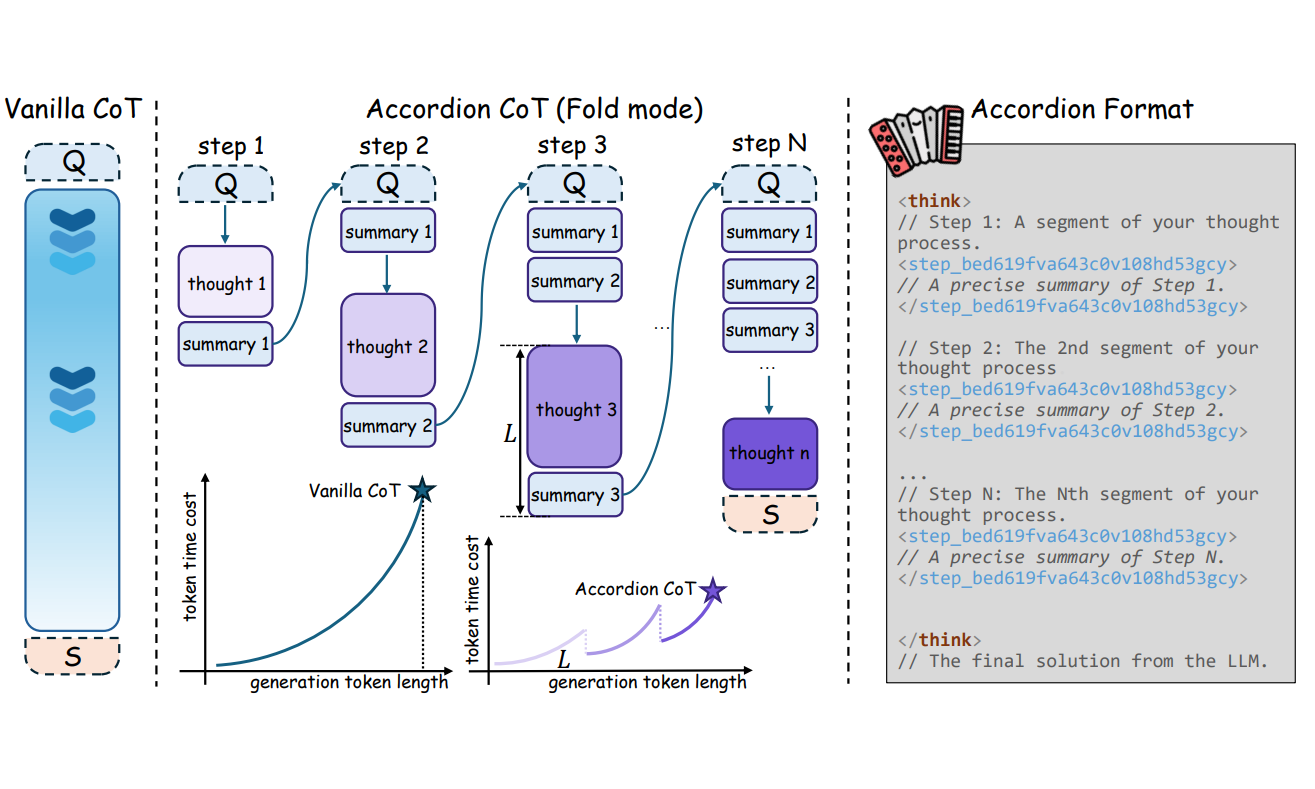

Accordion-Thinking: Self-Regulated Step Summaries for Efficient and Readable LLM Reasoning

Zhicheng Yang, Zhijiang Guo, Yinya Huang, Yongxin Wang, Wenlei Shi, Yiwei Wang, Xiaodan Liang, Jing Tang

International Conference on Machine Learning

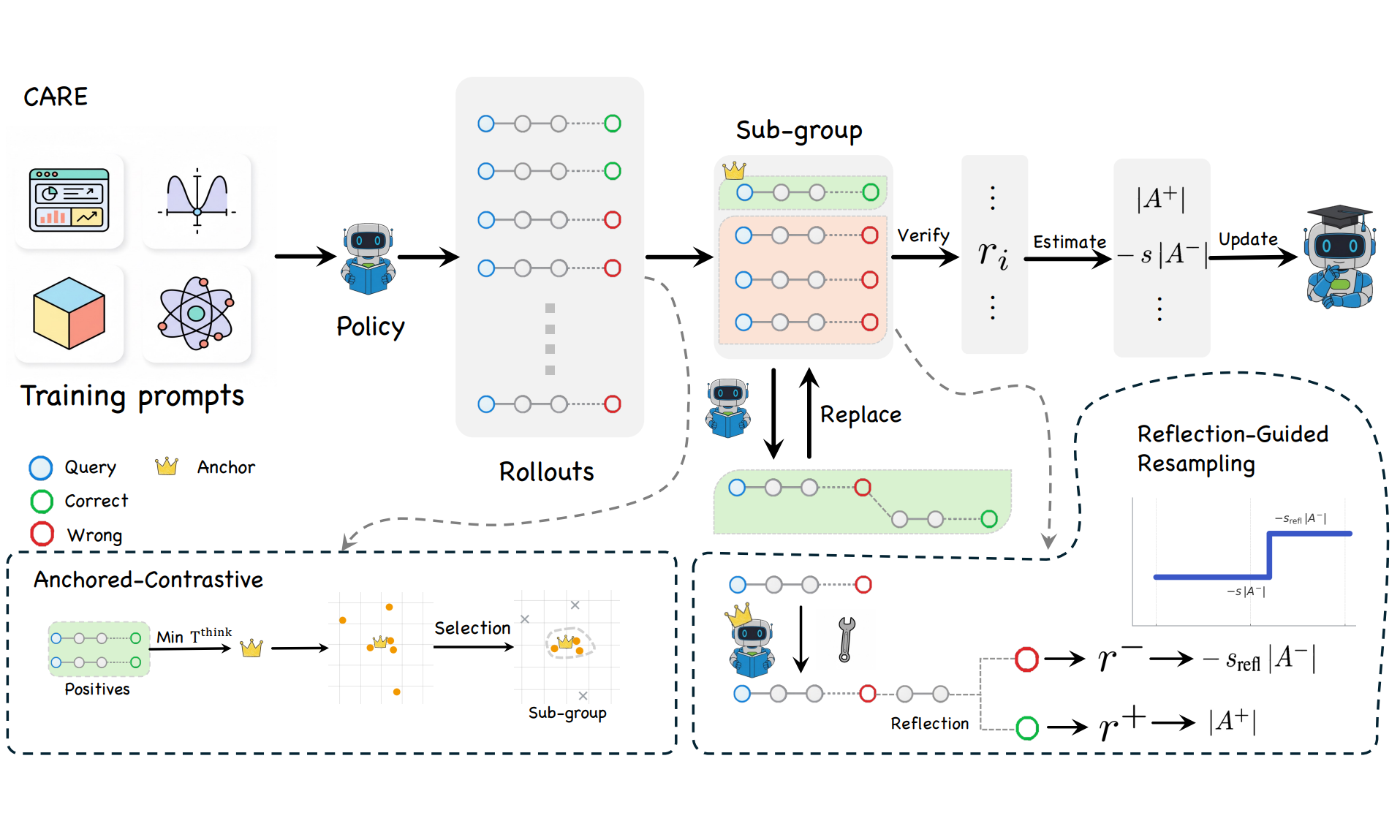

CARE What Fails: Contrastive Anchored-REflection for Verifiable Multimodal Reasoning

Yongxin Wang, Zhicheng Yang, Meng Cao, Mingfei Han, Haokun Lin, Yingying Zhu, Xiaojun Chang, Xiaodan Liang

IEEE Conference on Computer Vision and Pattern Recognition

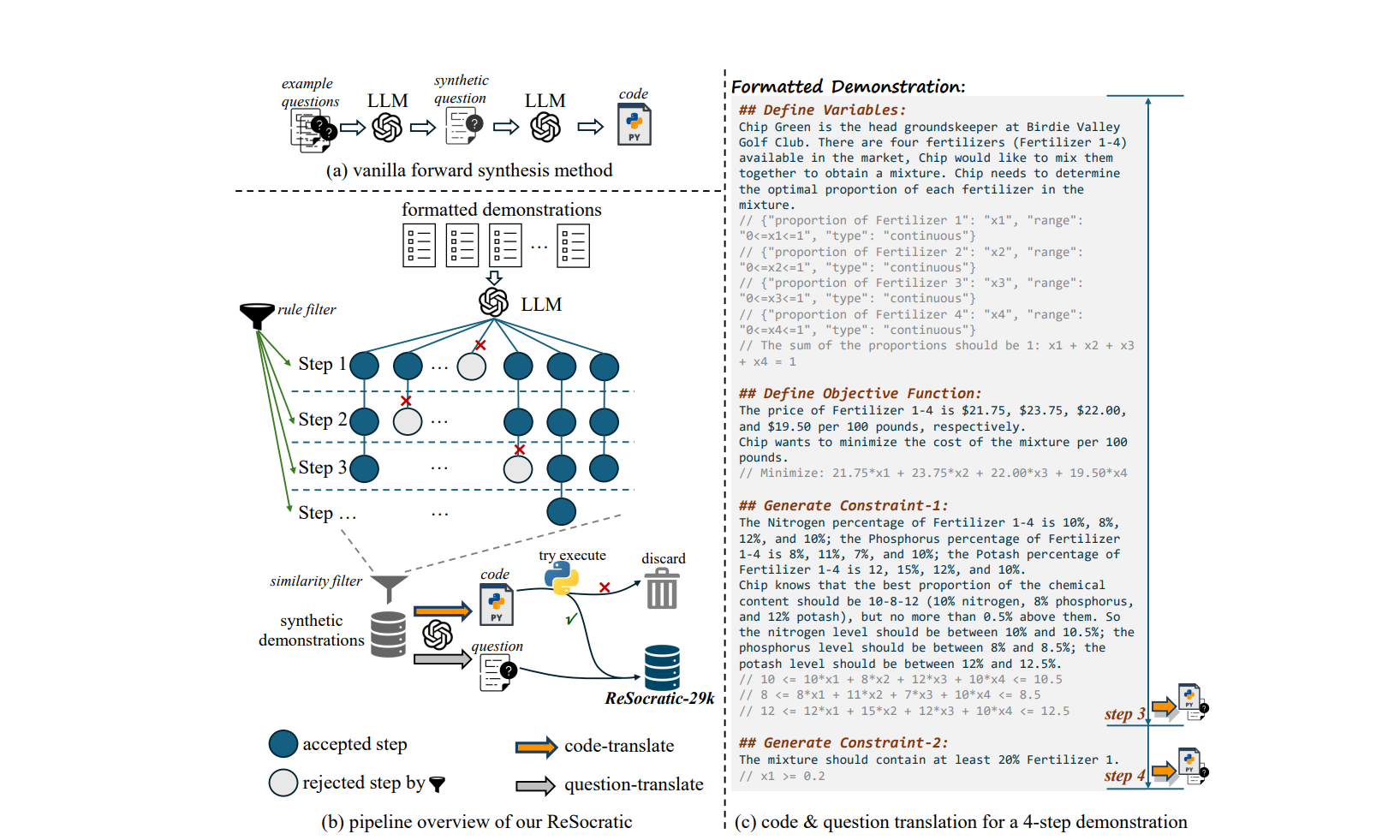

OptiBench Meets ReSocratic: Measure and Improve LLMs for Optimization Modeling

Zhicheng Yang, Yiwei Wang, Yinya Huang, Zhijiang Guo, Wei Shi, Xiongwei Han, Liang Feng, Linqi Song, Xiaodan Liang, Jing Tang

The Thirteenth International Conference on Learning Representations

- Proving Theorems Recursively, Haiming Wang, Huajian Xin, Zhengying Liu, Wenda Li, Yinya Huang, Jianqiao Lu, Zhicheng Yang, Jing Tang, Jian Yin, Zhenguo Li, Xiaodan Liang, NeurIPS 2024.

- AlignedCoT: Prompting Large Language Models via Native-Speaking Demonstrations, Zhicheng Yang, Yinya Huang, Jing Xiong, Liang Feng, Xiaodan Liang, Yiwei Wang, Jing Tang, Findings of EMNLP 2024. | Code

- CLOMO: Counterfactual Logical Modification with Large Language Models, Yinya Huang, Ruixin Hong, Hongming Zhang, Wei Shao, Zhicheng Yang, Dong Yu, Changshui Zhang, Xiaodan Liang, Linqi Song, ACL 2024.

- ATG: Benchmarking Automated Theorem Generation for Generative Language Models, Xiaohan Lin, Qingxing Cao, Yinya Huang, Zhicheng Yang, Zhengying Liu, Zhenguo Li, Xiaodan Liang, Findings of NAACL 2024.

- LogicSolver: Towards Interpretable Math Word Problem Solving with Logical Prompt-Enhanced Learning, Zhicheng Yang*, Jinghui Qin*, Jiaqi Chen, Liang Lin, Xiaodan Liang, Findings of EMNLP 2022. | Code

- Unbiased Math Word Problems Benchmark for Mitigating Solving Bias, Zhicheng Yang, Jinghui Qin, Jiaqi Chen, Xiaodan Liang, Findings of NAACL 2022. | Code

- Template-based Contrastive Distillation Pre-training for Math Word Problem Solving, Jinghui Qin*, Zhicheng Yang*, Jiaqi Chen, Xiaodan Liang, Liang Lin, IEEE Transactions on Neural Networks and Learning Systems, 2023.

🧪 Selected Preprints

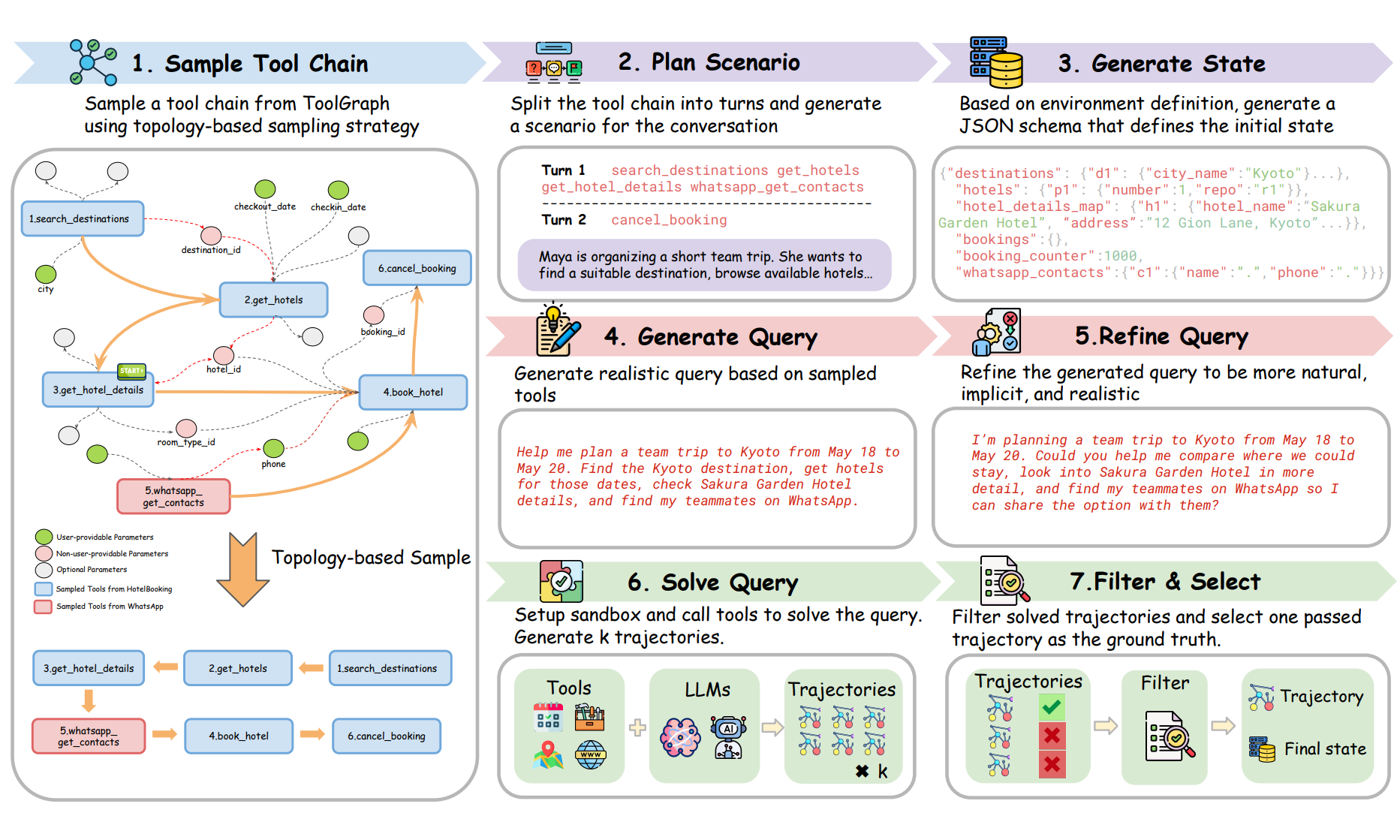

EnvFactory: Scaling Tool-Use Agents via Executable Environments Synthesis and Robust RL

Minrui Xu, Zilin Wang, Mengyi DENG, Zhiwei Li, Zhicheng Yang, Xiao Zhu, Yinhong Liu, Boyu Zhu, Baiyu Huang, Chao Chen, Heyuan Deng, Fei Mi, Lifeng Shang, Xingshan Zeng, Zhijiang Guo

arXiv preprint

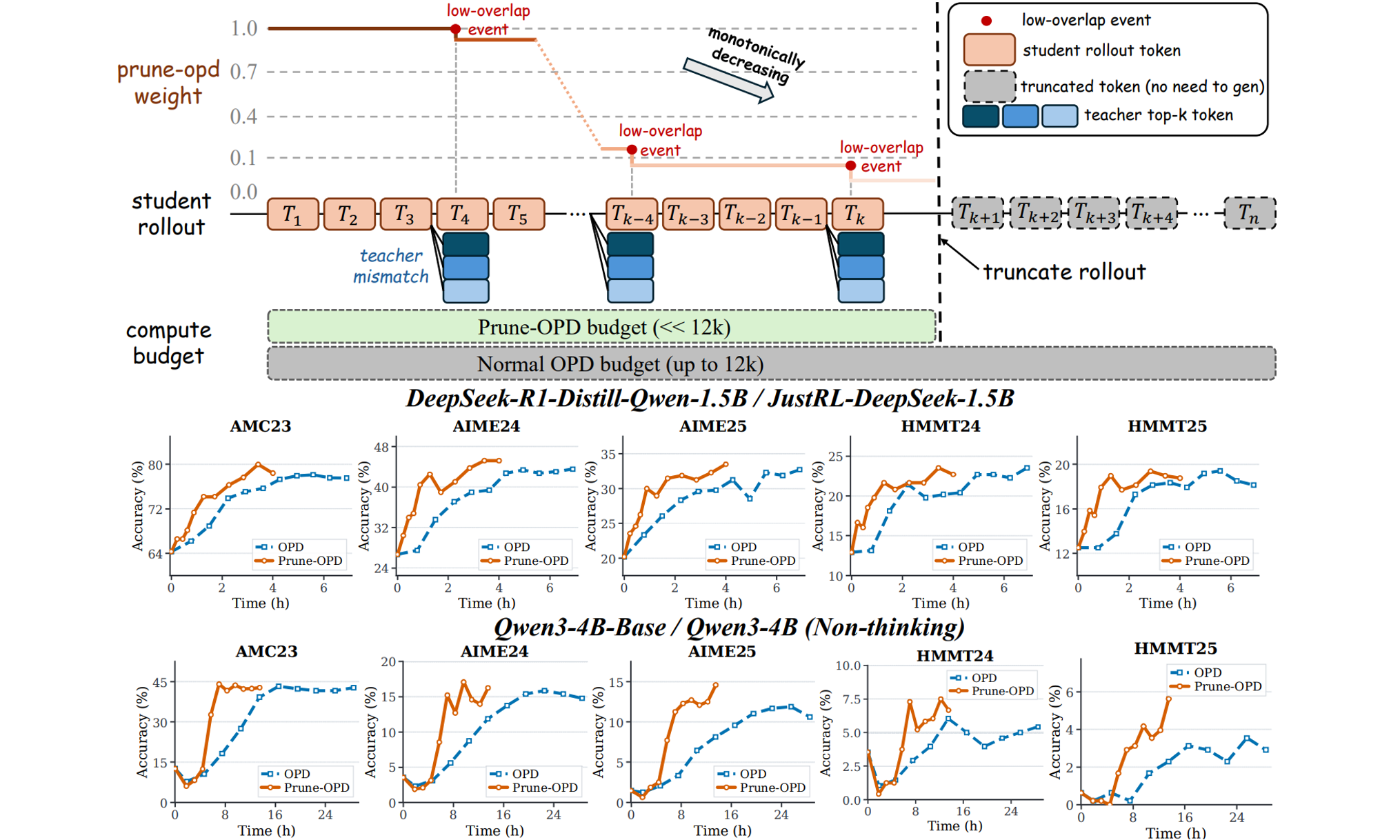

Prune-OPD: Efficient and Reliable On-Policy Distillation for Long-Horizon Reasoning

Zhicheng Yang, Zhijiang Guo, Yifan Song, Minrui Xu, Yongxin Wang, Yiwei Wang, Xiaodan Liang, Jing Tang

arXiv preprint

- TreeRPO: Tree Relative Policy Optimization, Zhicheng Yang, Zhijiang Guo, Yinya Huang, Yongxin Wang, Yiwei Wang, Xiaodan Liang, Jing Tang. | Code

🎖 Honors and Awards

- National First Prize, Contemporary Undergraduate Mathematical Contest in Modeling.

- First Prize Undergraduate Scholarship, Sun Yat-sen University.

📖 Education

- 2020 - 2023: Master's degree in Pattern Recognition and Intelligent Systems, Sun Yat-sen University.

- 2016 - 2020: B.Sc. in Computer Science and Technology, Sun Yat-sen University.

💬 Professional Service

- Area Chair, ACL Rolling Review (ARR), January 2026.

- Organizer of the 2nd AI for Math Workshop @ ICML 2025.

- Reviewer for ICML, NeurIPS, ICLR, ACL, EMNLP, NAACL, and TNNLS.

💻 Internships

- LLM Research Intern, ByteDance Seed.

- LLM Research Intern, Huawei Noah’s Ark Lab.

- Recommender System Intern, ByteDance Douyin.